OpenAI recently published a piece called Harness Engineering, documenting an experiment: “building and shipping an internal beta of a software product with 0 lines of manually-written code.” A million lines of code, all agent-generated.

The core principle they distilled is disarmingly simple: “Humans steer. Agents execute.”

Engineers no longer write code. Their primary job became “to design environments, specify intent, and build feedback loops that allow Codex agents to do reliable work.” Architectural standards are encoded as linters and structural tests, and the agent operates autonomously within those boundaries, running an end-to-end workflow that can “escalate to a human only when judgment is required.” In their words, “building software still demands discipline, but the discipline shows up more in the scaffolding rather than the code.”
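To make the idea of a standard encoded as a structural test concrete, here is a minimal sketch in Python. It is an illustration only, not code from the OpenAI piece: the layer names (`ui`, `service`, `repo`), the `ALLOWED_IMPORTS` table, and the `check_module` helper are all hypothetical. The rule it enforces is that each layer may import only the layers it is explicitly allowed to depend on.

```python
import ast

# Hypothetical layering rule (an assumption for illustration):
# each layer may import only from the layers listed for it.
ALLOWED_IMPORTS = {
    "ui": {"service"},
    "service": {"repo"},
    "repo": set(),
}


def imported_packages(source: str) -> set[str]:
    """Return the top-level package names that a module's source imports."""
    found = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            found.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Absolute "from x.y import z" statements; relative imports are skipped.
            found.add(node.module.split(".")[0])
    return found


def check_module(layer: str, source: str) -> list[str]:
    """List every import in `source` that crosses a forbidden layer boundary."""
    # Forbidden = every known layer except this one and the ones it may use.
    forbidden = set(ALLOWED_IMPORTS) - ALLOWED_IMPORTS[layer] - {layer}
    return sorted(
        f"{layer} must not import {name}"
        for name in imported_packages(source) & forbidden
    )


if __name__ == "__main__":
    print(check_module("ui", "import service.api\nimport json"))
    print(check_module("ui", "from repo.db import Session"))
```

The first call prints an empty list and the second reports `ui must not import repo`. In a harness of this kind, a non-empty list would be handed back to the agent as feedback, so the human's taste is written down once in the table and then checked on every change.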

The model is elegant. It works. But it left me with a question I couldn’t shake:

If we extend this logic beyond writing code, to thinking itself, to human cognition and the creative process, does the model still hold?

Yes. But not entirely.

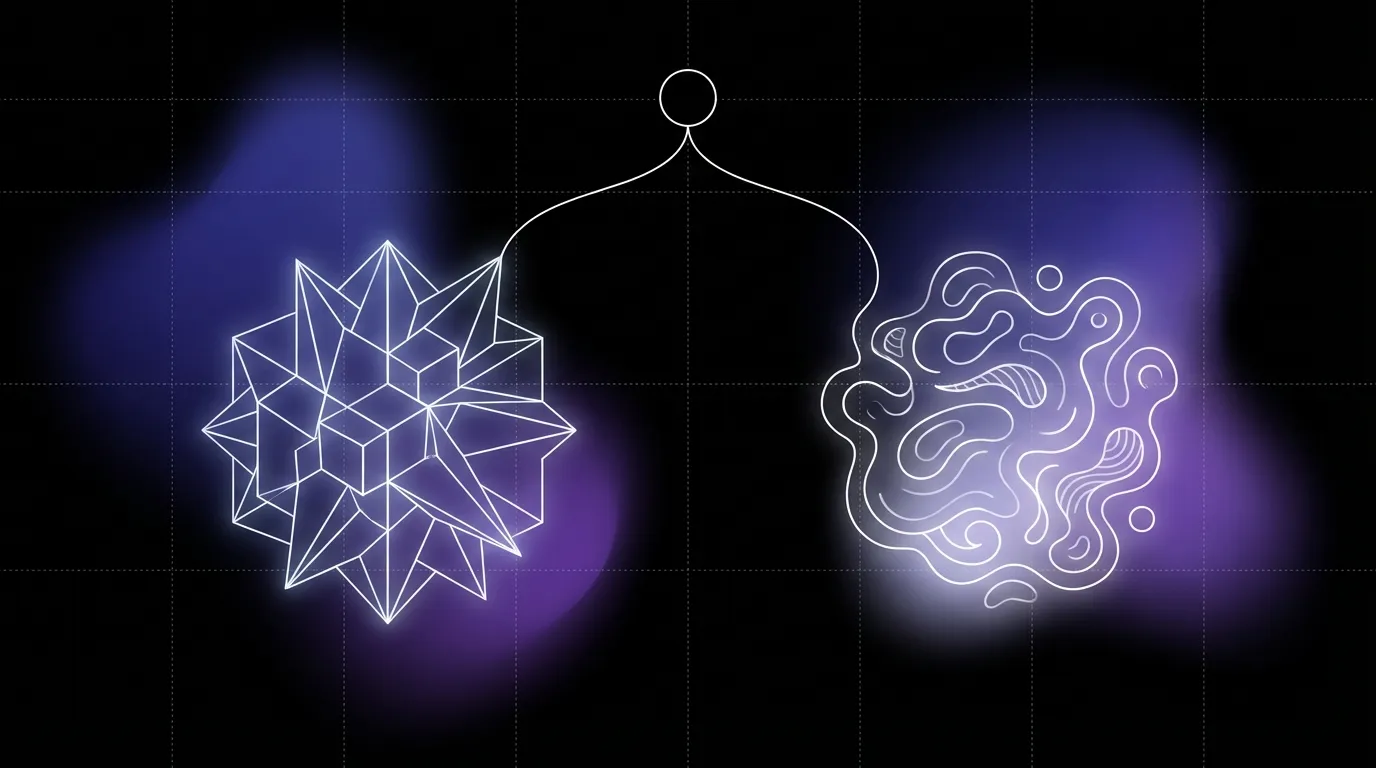

Because there is a fundamental asymmetry between code and thought: when you write code, you know what “correct” looks like. When you think, you often don’t.

Harness Engineering solves a convergence problem. Humans define taste; the system faithfully executes. The “golden principles,” the garbage collection that treats technical debt “like a high-interest loan,” the promise that “human taste is captured once, then enforced continuously on every line of code”: all of it is a sophisticated way of saying that we know what good looks like, so let the agent produce it consistently and at scale. Within this framework, AI does not create. It generates.

The most common argument for why AI cannot truly create is Sartrean: l’existence précède l’essence — existence precedes essence. Creation requires subjectivity, AI lacks subjectivity, therefore AI can only generate, never create.

But this argument has a hole in it.

Coleridge composed Kubla Khan in an opium dream and lost half of it to a knock at the door. The blues has no author; it is a tradition that sings through whoever picks up the guitar. Hilma af Klint insisted her paintings were dictated by spirits; she did not experience herself as the one deciding. In none of these cases is there a clean, deliberate subject making choices. Yet no one would call what emerged anything less than creation. The history of art runs on tension far more than on conscious intent.

So subjectivity may not be the variable that matters. The variable that matters is embodied experience — whether you have a body, whether you have existed in time, whether you have endured something.

The reason one person’s creation can strike another person is not that some “subject” made deliberate choices behind the curtain. It is that the work compresses the residue of a life lived: the memory held in the body, the texture of wounds, the direction of desire. The dreamer’s poem comes from the totality of everything they have lived through: their body, their scars, their longing. The same is true of every participant in a collective improvisation.

AI has not lived. It has processed vast quantities of language, but processing is not experience. Generation does not require experience; it only needs to output the highest-probability, highest-reward result.

Creation does.

To create is to make choices under genuine uncertainty, and that capacity comes from having chosen wrong before, having borne the consequences, and having carried those consequences forward into the rest of your life. Creation means being answerable for outcomes, answerable for values. And answerability presupposes that you are the one who absorbs the cost.

What, then, about value itself?

Sartre, in L’existentialisme est un humanisme:

“La valeur n’est pas autre chose que ce sens que vous choisissez” — value is nothing other than the meaning you choose.

AI possesses no endogenous value system. It has a formalized value function, a reward signal, which is an optimization target we embed from the outside. This is where the human niche lies: in deciding what matters.

But value itself is not fixed. It drifts.

The proposition that “humans are the source of value” does not rest on bedrock. It rests on sand. Luther’s Reformation split Europe, and the continent bled for more than a century. The Enlightenment dethroned divine right and got the guillotine in return. Civil rights in America took another hundred years after abolition to force the Constitution to mean what it said. Decades, sometimes centuries: this is the natural bandwidth at which human societies repair and reinvent themselves.

AI is an amplifier. If value is not constant, what it amplifies is conflict.

But flip the argument. If values were fixed and axiomatizable, they could be encoded and handed to an agent, which is exactly what Harness Engineering does. It is because value drifts that humans are irreplaceable.

Fixed values demand execution. Fluid values demand the capacity for self-doubt. You must be able to recognize that what you once believed may be obsolete, may be wrong, and then choose again in the absence of certainty.

The most valuable thinking tends to happen in the moment you realize your previous judgment may have been mistaken.

Can this kind of self-questioning be systematized? Harness Engineering’s operating principle, to “enforce boundaries centrally, allow autonomy locally,” has been validated in code. But transplant it into cognitive collaboration and you collide with a paradox. Consistency and freedom are in tension.

Any architecture for cognitive collaboration (call it a copilot, an agent, a digital twin) is, by definition, structure. And structure is constraint. The moment you try to use a system to “support” free thinking, the system is already shaping the direction of thought. You are attempting to embed existentialism inside an engineering framework. The contradiction is baked in.

Because genuine evolution is not convergence toward greater consistency. It is the permission, at critical moments, to break.

To put it concretely: if you set out to design a system that “allows cognitive rupture,” one that disrupts habitual thinking at the right moment and scaffolds cognition toward higher-order reflection, you face a logical trap. A rupture that has been designed is no longer a rupture. It is a sanctioned deviation, still contained within the preset constraint space. Genuine existential freedom is precisely what cannot be architected.

This paradox is irresolvable. But it is not a bug you need to fix. It is a feature you need to internalize.

What it means is that any cognitive collaboration architecture can, at best, create the conditions under which rupture becomes more likely. It cannot design the rupture itself. This is analogous to education — good teaching does not engineer the moment a student has an epiphany. It cultivates an environment rich and tense enough that epiphany can emerge on its own. The system can scaffold up to a threshold. But the step across that threshold must be taken by a person, and it cannot be predicted.

Harness Engineering asks the system for faithful execution: mirroring cognition for the sake of comfort and efficiency. But what cognition needs at its growing edge is honest discomfort: a system that scaffolds thinking toward friction and conflict, toward depth. The first makes you faster. The second makes you deeper.

The direction, then, is this: build an evolvable architecture for cognitive collaboration in which humans permanently retain the authority to define values and to make final decisions.

One last thing. An honest audit of everything I have argued.

“AI has not lived.” This claim rests on our incomplete understanding of consciousness and experience. We cannot say with certainty that what happens inside a large language model when it processes information does not constitute some embryonic form of experience. I am inclined to believe it does not, but “inclined to believe” is not proof.

So the force of this essay should not derive from a claim of human uniqueness. It should derive from honesty about uncertainty:

We are not certain whether AI has experience. We are certain that humans do. Until that uncertainty is resolved, the final weight in value judgments should remain on the human side.

This is a pragmatic argument, not a metaphysical one. It does not need to prove that AI can never create. It only needs to point out that while the uncertainty persists, placing the weight of creation with the party we know possesses these capacities is the more prudent choice.

L’homme est condamné à être libre — man is condemned to be free. Freedom is not something you defend once. It is something you choose, again and again. The human position in the age of AI is not maintained by declaration; it is maintained by the active exercise of value judgment in every act of human-machine collaboration. Defense is passive. Exercise is active.

But there is one question that stays with us. Throughout history, the process by which humans renegotiate values has been bloody: wars, revolutions, societies tearing themselves apart. It has taken decades, sometimes centuries. AI, as an amplifier entering this process, may accelerate and scale that disruption far beyond the bandwidth at which human societies can repair themselves.

Can humans renegotiate values fast enough to keep pace with AI’s amplification of conflict?

We don’t have the full answer. But this is precisely the question we’re building around: a cognitive architecture that keeps humans equipped to negotiate, to judge, to choose, even as the system scales beyond what any individual can hold alone.

In an era of accelerating value drift and exponential system capability, standing firm matters more than standing tall.